
4/27/2023 Online morse decoder audio

Takes the FFT to create a spectrogram of the given audio signal Model = Model(open("morseCharList.txt").read(), config, decoderType = DecoderType.BestPath, mustRestore=True) (recognized, probability) = model.inferBatch(batch, True) Overlap = nfft-56 # overlap value for spectrogram

SAMPLES_PER_FRAME = 4 #Number of mic reads concatenated within a single window # Import Modules #įrom morse.MorseDecoder import Config, Model, Batch, DecoderType Real time Morse decoder using CNN-LSTM-CTC Tensorflow model SAMPLE_LENGTH = int(CHUNK_SIZE*1000/RATE) #length of each sample in ms

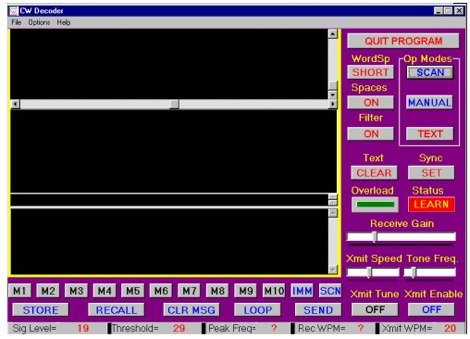

I used the following constants in mic_read.py.įORMAT = pyaudio.paInt16 #conversion format for PyAudio streamĬHUNK_SIZE = 8192 #number of samples to take per read I adapted the main Spectogram loop from this Github repo. The python script running the model is quite simple and listed below. The current model doesn't know about the "Space" character so it is just decoding what it has been trained on. The final problem is the lack of spaces between the words. I believe that the current model doesn't have enough examples to decode all the numbers correctly. The ARRL training material that I used to build the corpus for training has about 8.6% words that are numbers (such as bands, frequencies and years). The decoder has also problems with some numbers even when fully visible in the 128x32 image frame. This means that a single Morse character is moving out of the frame but some part of the character can be still visible, causing incorrect decodes. The scrolling 128x32 image that is produced from the spectrogram graph does not have any smarts - it is just copied at every update cycle and fed into the infer_image() function. It has visible problems when the current image frame cuts the Morse character into parts. The new deep learning Morse decoder is also able to decode the audio with probabilities ranging from 4% to over 90% during this period. THAT BAND STARTED ATĪs can be seen from the YouTube video FLDIGI is able to copy this CW quite well. SQUEEZING IN FIVE BANDS SACRIFICED PART OF 80 METERS. BECAUSE THE 210 USED THE SAME VFO AND BAND SWITCH AS THE 180, METERS HEREAFTER, WHEN THE 210 SERIES IS MENTIONED, THE 215 IS ALSO THE NEWĢ10 COVERED 80 10 METERS, WHILE THE OTHERWISE IDENTICAL 215 COVERED 160 15 A PAIR OF SUCCESSORS EARLY IN 1975,ĪTLAS INTRODUCED THE 180S SUCCESSOR IN REALITY, A PAIR OF THEM. MODULATION, THE 180S RECEIVER HAD NO RF AMPLIFIER STAGE THE ANTENNA INPUTĬIRCUIT FED THE RADIOS MIXER DIRECTLY. 
IN ORDER TO IMPROVE IMMUNITY TO OVERLOAD AND CROSS REQUIRED TWO BAND SWITCH SEGMENTS TO COVER 75/80 METERS, BUT WAS AMPLE FOR SOLID STATE TRANSCEIVER FEATURED NO TUNE OPERATION. THATS NOTHING SPECIAL TODAY, BUT IT WAS A TINY RIG IN 1974. ANĪNALOG VFO FOR THE 160, 80, 40, AND 20 METER BANDS REPLACED THE SC 130S THE ATLAS 180 ADAPTED THE MAN PACK RADIOS DESIGN FOR AMATEUR USE. � NOW 30 WPM � TEXT IS FROM JULY 2015 QST PAGE 99 �ĪGREEMENT WITH SOUTHCOM GRANTED ATLAS ACCESS TO THE SC 130S TECHNOLOGY. The full text at 30 WPM is here - I have highlighted the text section that is playing in the above video clip. Finally, on the bottom right I have the 128x32 image frame that I am feeding to the model. On the bottom left I have Audacity playing a sample 30 WPM practice file from ARRL. The morse code is quite readable on this graph. On the top right I have the Spectrogram window that plots 4 seconds of the audio on a frequency scale. On the top middle I have console window open printing the frame number, CW tone frequency followed by "infer_image:" and decoded text as well as the probability that the model assigns to this result. Starting from the top left I have FLDIGI window open decoding CW at 30 WPM speed. I recorded this sample YouTube video below in order to document this experiment. With some tuning of the various components and parameters I was able to put together a working prototype using standard Python libraries and the Tensorflow Morse decoder that is available as open source in Github. I wanted to see if this new model is capable of decoding audio in real-time so I wrote a simple Python script to listen microphone, create a spectrogram, detect the CW frequency automatically, and feed 128 x 32 images to the model to perform the decoding inference. New real-time deep learning Morse decoder The training data corpus was created from ARRL Morse code practice files (text files). 
In this latest experiment I trained a new Tensorflow based CNN-LSTM-CTC model using 27.8 hours of Morse audio training set (25,000 WAV files - each clip 4 seconds) and achieved character error rate of 1.5% and word accuracy of 97.2% after 2:29:19 training time. This previous blog post covers the new approach of building Morse decoder by training a CNN-LSTM-CTC model using audio that is converted to small image frames. I have done some experiments with deep learning models previously.
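The full script is not reproduced in this post, so the following is only a minimal sketch of the audio-to-image steps it describes: build a spectrogram, find the CW tone, and cut out a 128x32 frame. It uses NumPy alone and a synthetic keyed tone instead of the microphone. The sample rate, the FFT size, the peak-picking tone detector and all function names are assumptions made for illustration. Only `overlap = nfft-56`, the 4-second window and the 128x32 frame size come from the post. The model call itself (`model.inferBatch`) is left out.

```python
import numpy as np

RATE = 8000                 # assumed sample rate in Hz (not stated in the post)
NFFT = 256                  # assumed FFT size (the post only gives overlap = nfft-56)
OVERLAP = NFFT - 56         # overlap value from the post's script
FRAME_W, FRAME_H = 128, 32  # image size fed to the model, per the post


def spectrogram(signal, nfft=NFFT, overlap=OVERLAP):
    """Return a magnitude spectrogram of shape (nfft // 2 + 1, n_windows)."""
    step = nfft - overlap
    n_windows = 1 + (len(signal) - nfft) // step
    window = np.hanning(nfft)
    frames = np.stack([signal[i * step:i * step + nfft] * window
                       for i in range(n_windows)])
    return np.abs(np.fft.rfft(frames, axis=1)).T


def detect_tone_bin(spec):
    """Pick the frequency bin with the most total energy (hypothetical detector)."""
    return int(np.argmax(spec.sum(axis=1)))


def make_frame(spec, tone_bin, width=FRAME_W, height=FRAME_H):
    """Crop `height` bins around the tone and resample time to `width` columns."""
    lo = int(np.clip(tone_bin - height // 2, 0, spec.shape[0] - height))
    band = spec[lo:lo + height]
    cols = np.linspace(0, band.shape[1] - 1, width).round().astype(int)
    frame = band[:, cols]
    peak = frame.max()
    return frame / peak if peak > 0 else frame  # scale to the range 0..1


def synth_keyed_tone(freq=600.0, seconds=4.0, rate=RATE):
    """Stand-in for microphone input: a tone keyed on and off every 100 ms."""
    t = np.arange(int(seconds * rate)) / rate
    keying = (np.floor(t / 0.1) % 2 == 0).astype(float)
    return keying * np.sin(2 * np.pi * freq * t)


audio = synth_keyed_tone()            # 4 seconds of audio, as in the post
spec = spectrogram(audio)
tone_bin = detect_tone_bin(spec)
tone_hz = tone_bin * RATE / NFFT      # bin index converted to Hz
frame = make_frame(spec, tone_bin)    # array of 32 rows by 128 columns
print(frame.shape, round(tone_hz, 1))
```

In a real-time setup the `audio` array would be the last few microphone reads concatenated together, and `frame` would be handed to the model at every update cycle. Because the frame is simply copied each cycle, a character that is sliding out of the left edge is still partly visible, which is the source of the incorrect decodes described in this post.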
